We have already looked at a very simple dynamic level solution example using two tables. Now We move to dynamic security where other users still have full access

At this point it should be noted that I am the Admin of the App Workspace

This means that I can see everything at dynamic level.

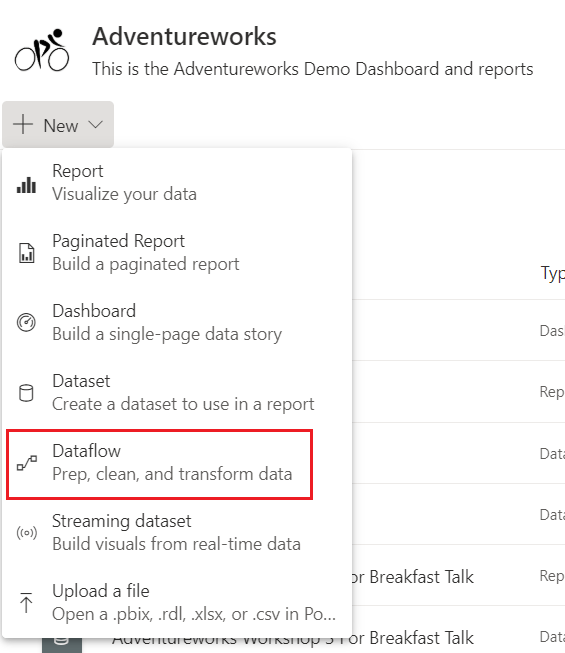

Create a New report

for this example, a brand new App workspace Area and report is created

2 tables are created in Power BI to test

Employee Table

Sales table

Add a Manager Role

Notice the isManager column within the Employee table. It is set to 1 for the manager and 0 for everyone else

Manage Relationships

We now need to amend the Employee role slightly that we set up previously within Manage Roles.. This is our previous Logic

the following code can be added to Table filter DAX expression. We will look at this in more detail.

IF(

MAXX(

Filter(

'Employee',

'Employee'[Email]=USERPRINCIPALNAME())

,'Employee'[IsManager]) = 0,

'Employee'[Email]=USERPRINCIPALNAME(),

1=1

)

In order to get an understanding of the DAX lets go to the inner DAX and move outwards.

Filter: Allows you to manipulate data context to create dynamic calculations. Which means that it returns a table that has already been filtered.

In this case the <table> to be filtered is the Iterator. the <filter> expression is a true or false boolean that gets evaluated for each row of the table. this is the Row Context . in this case is it true or false that the email in the data is equal to the email currently set within UserName()

FILTER is not used independently, but as a function that is embedded in other functions that require a table as an argument.

MaXX: Evaluates an Expression for each row and returns the largest numeric value. So it takes the result of the filter (the whole row from the table object) and Brings back the Max number.

So in this case, we can create a quick measure to understand what it is doing using my email address hardcoded into the measure

Maxx Measure check = MAXX(FILTER(DimEmployee,DimEmployee[EmailAddress]=”Debbie.Edwards@emailaddress.com”),DimEmployee[isManager])

I am not the manager, so in this instance our Maxx Iterator comes back with 0. It is not true

If: the results of the MAXX is 0 (Not true) then we just show the data related to that person

ELSE: If the User is a manager then show everything. This DAX expression is a simple way of dispaying everything

You could also use this DAX expression

if(

LOOKUPVALUE(‘Employee'[IsManager],’Sales Rep'[Email],USERPRINCIPALNAME())=1,

true(),

‘Employee'[Email] = USERNAME()

)

LOOKUPVALUE

LOOKUPVALUE( <result_columnName>, <search_columnName>, <search_value>[, <search_columnName>, <search_value>]…[, <alternateResult>])

- result_columnName is an existing column you want to return. [IsManager]

- search_columnName is the column where the lookup gets performed [Email]

- search_value is related to the actual value you are searching for. Not a column. USERPRINCIPALNAME()

Brings back IsManager where the userprincipalname is the current Email address

Go back to Modelling and Manage roles. This is currently set to [EmailAddress] = USERNAME() but we are going to replace with the above IF

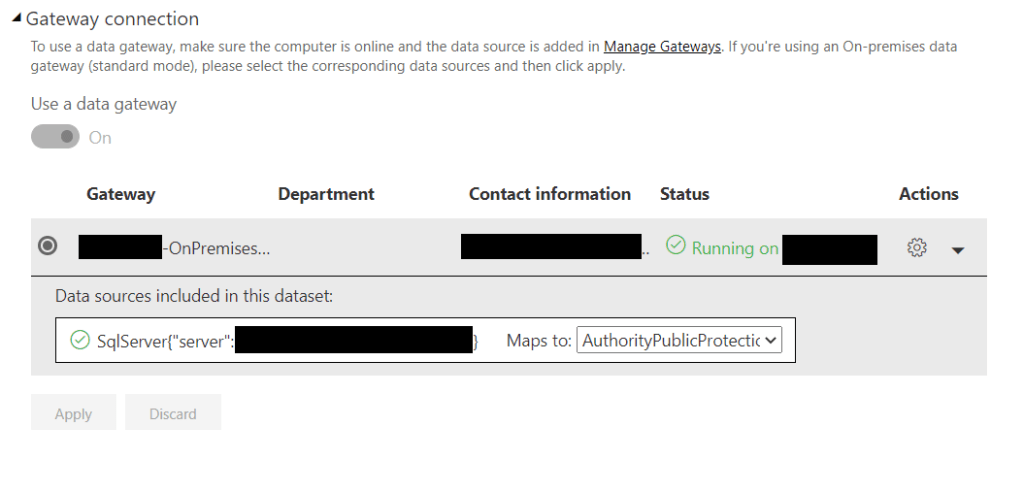

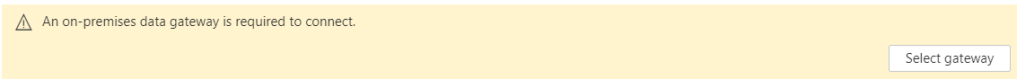

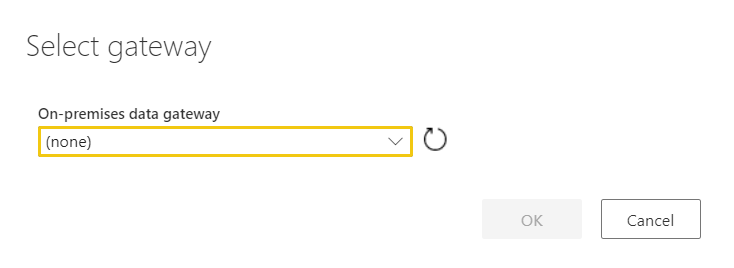

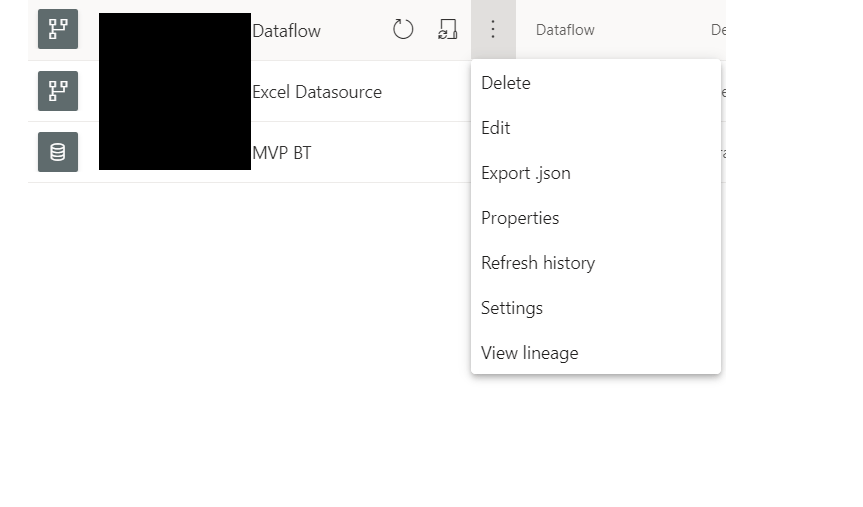

Republish and go back to Service

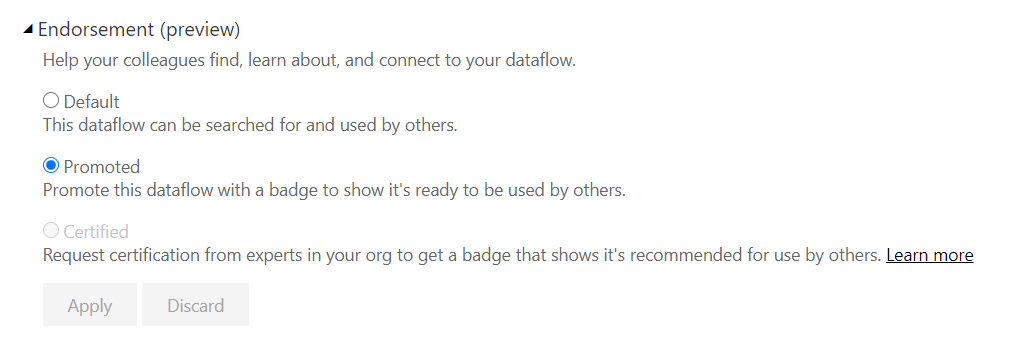

Back in Datasets > Security Add your emails as a role and save

Again you can test as role from here.

Test one

In this case:

User 1 is Admin and can see all the rows

User 2

Just the one row of data is displayed for user 2

User 3

User 3 sees all the records because of the IsManager Flag

Conclusion

If you publish the workspace as an app, Row level security will work for all end users, except for Admins, Members and Contributors in the workspace. These three roles can see all data, whether in the workspace or app.

for this, My user 1 and User 2 would be added into the App and then they can see the reports with Row Level Security in Place

Both Static and Dynamic Role level security need a fair amount of maintenance.

Another security logic area that need to be looked at in more detail is when you want users to see everything in the top level report, but they can only drill down on the detail if they are allowed to see the detail.