Dataflows allow you to separate your data transformations from your datasets. We can now centralise the transformations in a dataflow and connect to that dataflow from Power BI Desktop, instead of having to connect directly to the data source.

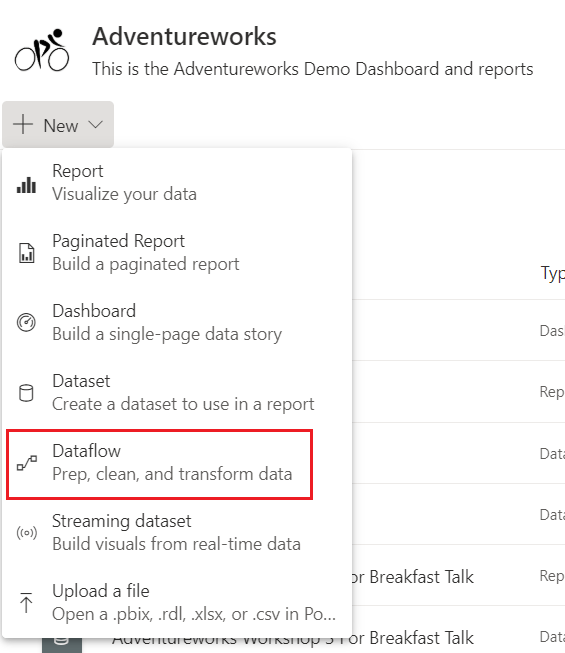

To create a dataflow, instead of getting data within the Power BI Desktop .pbix file, you create a dataflow in the Power BI Service.

This takes you to the Power Query Editor within the Service, where you can do all your transformations.

Dataflows are stored in Azure Data Lake Storage Gen2. See Use Data Lake Storage V2 as Dataflow Storage for more information on setting up your own data lake as dataflow storage.

This is a great feature and goes a long way towards providing us with our Modern Enterprise Data Experience.

There is only ever one owner of a dataflow, which means that if you are not the owner you cannot work on the dataflow. Thankfully, there is now a Take over option.

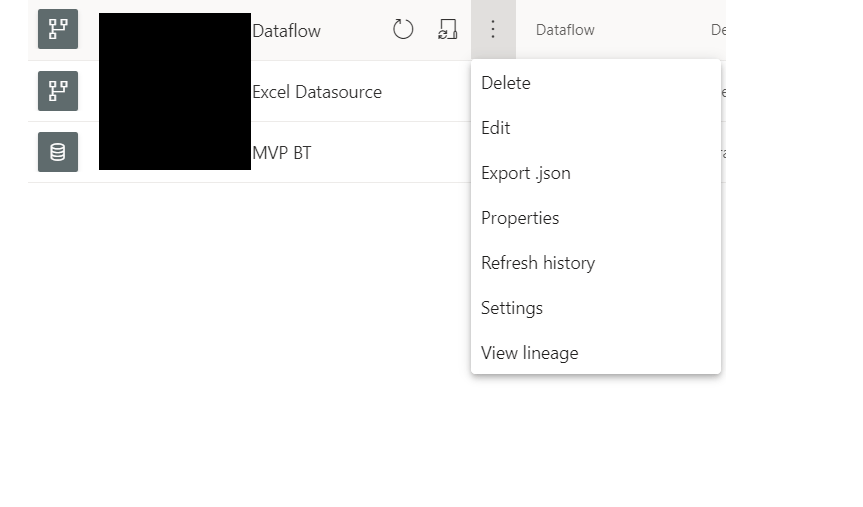

If you are not the owner but you are a workspace admin or member, you can take over ownership and work on the dataflow by going to

Settings (in the top menu bar) > Settings

and then to Dataflows. If you aren't the owner, you will see the Take over button, which allows you to work on the dataflow.

This is great, but we did have some questions.

If the initial owner added the credentials for the data source when creating the dataflow, does every new owner also need to add the credentials?

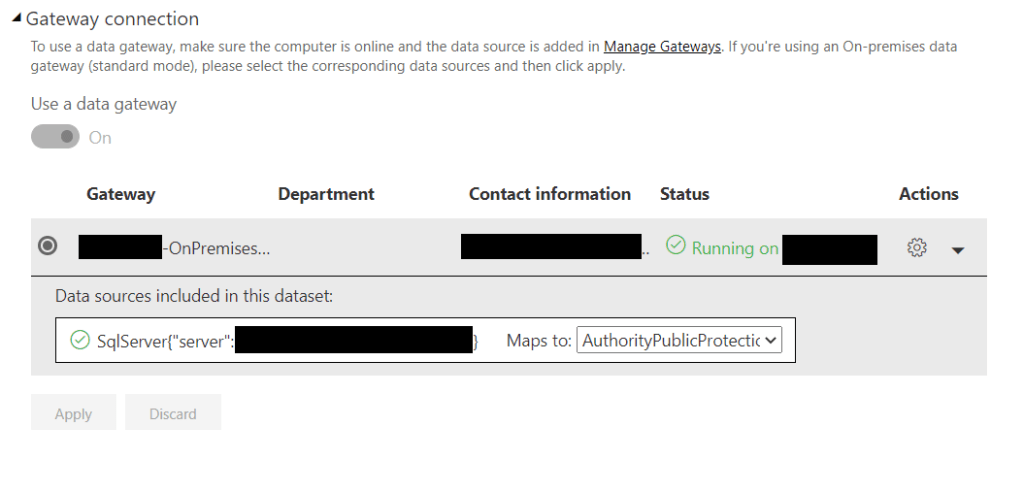

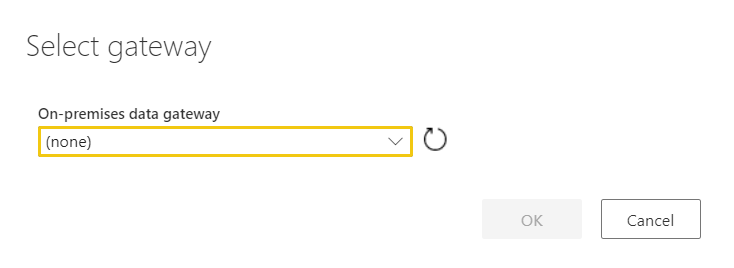

From our testing, when you take over the dataflow you need to reset the gateway, but you don't have to worry about the credentials.

This happens every time you take over the dataflow.

Dataflows connected to on-premises data sources: how do we use the gateway?

- You can't use the personal mode gateway with a dataflow. You must use the Enterprise Gateway.

- You must have a Power BI Pro License to use the Gateway

- The connections use the authentication and user information entered in the data source configuration. When using takeover, these need to be re-established.
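After a takeover it can help to see which of the dataflow's data sources are routed through a gateway, since those are the ones whose gateway binding has to be reset. The Power BI REST API has a Dataflows - Get Dataflow Data Sources endpoint for this. The sketch below is a minimal illustration that only filters a response; it assumes each entry carries `connectionDetails` as an object and a `gatewayId` only when it is gateway-bound. The sample payload and the helper `gateway_bound_sources` are hypothetical, and authentication and the HTTP call itself are left out.

```python
from typing import List

# Hypothetical sample shaped like a "Dataflows - Get Dataflow Data Sources" response from
# GET https://api.powerbi.com/v1.0/myorg/groups/{groupId}/dataflows/{dataflowId}/datasources
SAMPLE_DATAFLOW_SOURCES = {
    "value": [
        {
            "datasourceType": "Sql",
            "connectionDetails": {"server": "ds2chl261", "database": "AppDB"},
            "datasourceId": "hypothetical-datasource-id-1",
            "gatewayId": "hypothetical-gateway-id",
        },
        {
            # Assumption: a cloud source with no gateway has no gatewayId
            "datasourceType": "Web",
            "connectionDetails": {"url": "https://example.com/data.csv"},
            "datasourceId": "hypothetical-datasource-id-2",
        },
    ]
}


def gateway_bound_sources(response: dict) -> List[str]:
    """Return 'server/database' labels for data sources that go through a gateway."""
    labels = []
    for ds in response.get("value", []):
        if ds.get("gatewayId"):
            details = ds.get("connectionDetails", {})
            labels.append(f"{details.get('server', '?')}/{details.get('database', '?')}")
    return labels


print(gateway_bound_sources(SAMPLE_DATAFLOW_SOURCES))
```

Filtering on `gatewayId` keeps the check independent of the data source type, so the same helper works for SQL, file or other on-premises sources.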

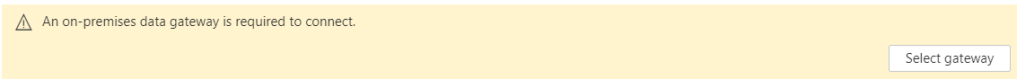

There are three developers working on a solution. Two have been at the company for a while and have no issues accessing the dataflow. However, when I take over the dataflow and open the Power Query Editor in the Service, I hit the following error.

Clearly, I don't yet have access to the Enterprise Gateway.

Gateway checklist

1. Install the gateway on a server (you can download the gateway from the Power BI Service).

   - In this example, the gateway has already been installed.

2. Sign in to your Power BI account to configure the gateway.

   - Once installed, the gateway needs to be configured. In the Power BI Service, go to Settings > Manage Gateways.

   - Decide on a name and a recovery key (and make sure you don't lose the key).

3. Add data sources to the gateway. For each data source you supply:

   - Server name

   - Database name

   - User name

   - Password

   - Security or authentication method

   - Advanced settings specific to that data source type

   - Again, because Laura can access the data source, this has clearly all been set up: server ds2chl261, database AppDB.
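To confirm outside the UI that a gateway already has the data source registered, the Power BI REST API provides Gateways - Get Datasources (`GET https://api.powerbi.com/v1.0/myorg/gateways/{gatewayId}/datasources`). The sketch below only searches a response for a given server and database. It assumes, as in the documented example response, that `connectionDetails` comes back as a JSON-encoded string. The sample payload and the helper `find_datasource` are hypothetical, and obtaining an access token and calling the endpoint are not shown.

```python
import json
from typing import Optional

# Hypothetical sample shaped like a "Gateways - Get Datasources" response.
SAMPLE_RESPONSE = {
    "value": [
        {
            "id": "hypothetical-datasource-id-1",
            "gatewayId": "hypothetical-gateway-id",
            "datasourceType": "Sql",
            "datasourceName": "AppDB",
            "connectionDetails": "{\"server\":\"ds2chl261\",\"database\":\"AppDB\"}",
        },
        {
            "id": "hypothetical-datasource-id-2",
            "gatewayId": "hypothetical-gateway-id",
            "datasourceType": "Sql",
            "datasourceName": "Sales",
            "connectionDetails": "{\"server\":\"otherserver\",\"database\":\"Sales\"}",
        },
    ]
}


def find_datasource(response: dict, server: str, database: str) -> Optional[dict]:
    """Return the first gateway data source matching server and database, or None."""
    for ds in response.get("value", []):
        # connectionDetails is a JSON string here, so decode it before comparing
        details = json.loads(ds.get("connectionDetails", "{}"))
        if (details.get("server", "").lower() == server.lower()
                and details.get("database", "").lower() == database.lower()):
            return ds
    return None


match = find_datasource(SAMPLE_RESPONSE, "ds2chl261", "AppDB")
print(match["id"] if match else "not found")
```

The comparison is case-insensitive because SQL Server names are usually typed with inconsistent casing. Tighten it if your sources are case-sensitive.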

4. Tell the gateway who can access the data source.

   - Click the Users tab and add the users of the data source.

5. Amend the gateway.

   - Again, go into Settings > Manage Gateways.

   - Choose the data source (server and database) and go to the Users tab to add users.

   - In the above example, the gateway is being amended by adding a new user.

Once in place, the user will simply be able to select the Gateway as above.
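Adding a user to a gateway data source can also be scripted with the REST API call Gateways - Add Datasource User (`POST .../gateways/{gatewayId}/datasources/{datasourceId}/users`), which is handy when several developers need access at once. This is a minimal sketch that only builds the request with the standard library. The gateway ID, data source ID, email address and token are hypothetical placeholders, and getting a valid Azure AD access token is assumed to happen elsewhere.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_add_datasource_user_request(gateway_id: str, datasource_id: str, email: str,
                                      access_token: str,
                                      access_right: str = "Read") -> urllib.request.Request:
    """Build (but do not send) a POST that grants a user access to a gateway data source."""
    url = f"{API_ROOT}/gateways/{gateway_id}/datasources/{datasource_id}/users"
    body = {"emailAddress": email, "datasourceAccessRight": access_right}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical IDs and address, for illustration only
req = build_add_datasource_user_request(
    "hypothetical-gateway-id", "hypothetical-datasource-id",
    "new.developer@example.com", "<access-token>")
print(req.get_method(), req.full_url)
# To actually send it with a real token: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes it easy to review exactly what will be posted before granting anyone access.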

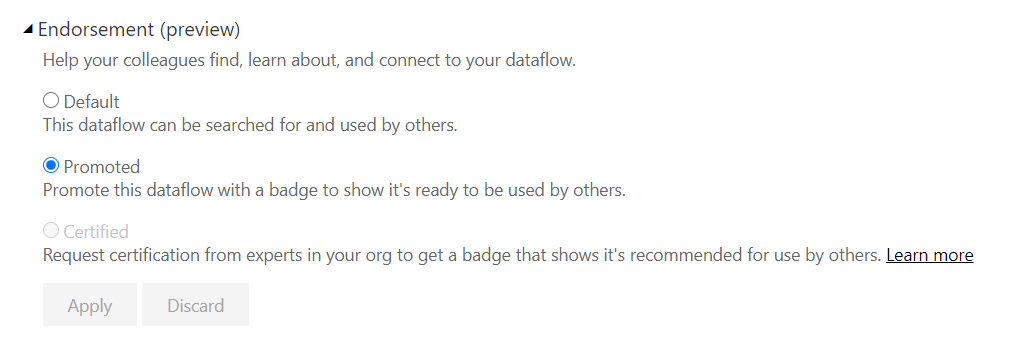

Preview of Promoted Dataflows

As of June 2020, you can set your dataflow to Promoted so that everyone knows it has been created and is managed centrally, possibly by your BI team. It will eventually give you your ‘one view of the truth’.

Go to Settings for your dataflow

and promote the dataflow in the same way as you can with a dataset.

Only do this if it's established that the dataflow is now centrally managed rather than a self-service data mashup.

Note that Certified isn't enabled yet, because certification is done by your Power BI admin.