Take this data as an example

- ID Total

- 99 1

- 99 1

- 101 1

- 101 1

- 101 1

- 333 1

- 333 1

- 333 1

- 333 1

- 635 1

- 635 1

- 635 1

- 635 1

If you do the usual measure SUM(Total) you would get

- ID Total

- 99 2

- 101 3

- 333 4

- 635 4

But I don’t want the visual to show the above. I want the number of occurences on the axis and then the number of IDs within that occurence as the value

You cant use a measure on the axis, they can only be used as values. How you you solve this problem?

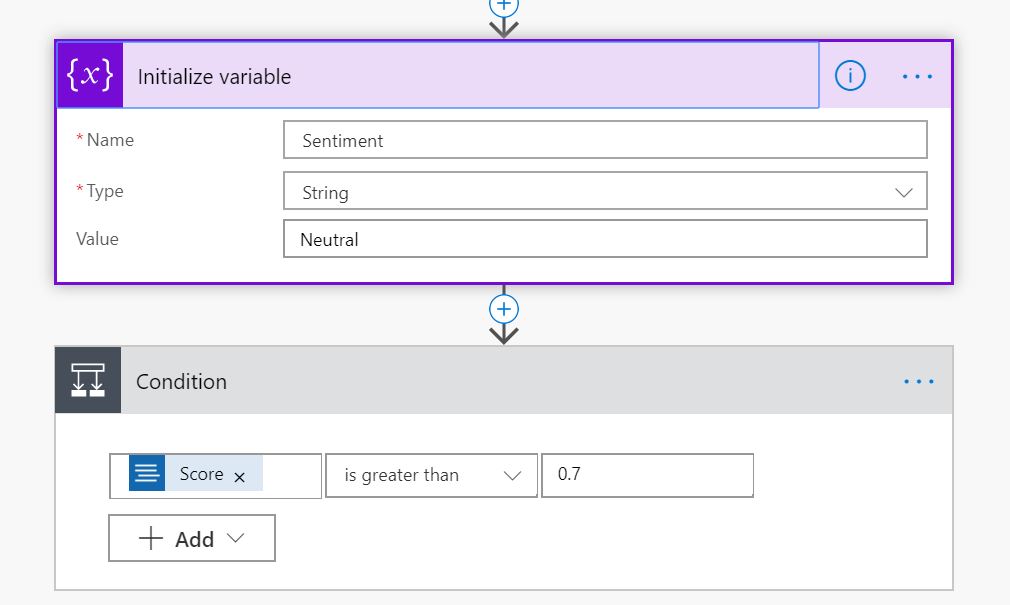

Go to Modelling and New Table

SummaryTable =

SUMMARIZE (

'fact Fact',

'fact Fact'[ID],

"Frequency", COUNT ( 'fact Fact'[ID]))

SUMMARIZE Returns a summary table for the requested totals over a set of groups. In this case, Our group is the IDs.

For the Summary we are simply counting our IDs as the Frequency

Next, Add a new Table

PotentialFreqs = GENERATESERIES ( MIN (SummaryTable[Frequency] ),max('SummaryTable'[Frequency]), 1 )

This creates a list of Frequencies starting at 1 and Ending at our Max Frequency. there is is issue here. we have a lot of NULL Values that creates a large Frequency number that we don’t want in our series of frequencies. this means we have values from 1 to 98 and there should only be values 1 to 4. How do we remove these values?

SummaryTable =

SUMMARIZE (

'fact Fact',

'fact Fact'[ID],

"Frequency", IF(

'fact Fact'[ID]

<>"0",COUNT

('fact Fact'[ID]))

)

Note the addition of the IF statement. If the ID is not zero then count the IDs. Else do nothing

Create relationships

In order to use the new tables we need to create relationships between them

the Summary table is joined to the fact table by the ID (Business key) within the fact table

PotentialFreqs is joined to the SummaryTable via Value to Frequency

the first visual to try this out is a stacked bar chart. Value from PotentialFreqs table is in the Axis and Frequency from the Summary Table is used as the Value.

So we can see that 33 of our IDs have 3 records in the table.

the PotentialFreqs table is really useful if you want to plot your data in a histogram as it gives you a continuous type to plot against rather than categorical

In conclusion, if you need to use a measure on an Axis rather than a value, Create a summary table and then join to the main tables in your model

Update

Unfortunatly the above solution doesnt quite work because I want to base my metrics against each year. sometimes the IDs are split into Years. I will be looking at this later.